A Race to Identify Twitter Bots

The growing popularity of social media raises new questions about online security. According to a recent Twitter SEC filing, approximately 8.5 percent of all users on Twitter are bots, or fake accounts used to produce automated posts. While some of these accounts have commercial purposes, others are “influence bots” used to sway opinion on a particular topic.

Concerned by the future potential of fake social media accounts, DARPA’s Social Media in Strategic Communication (SMISC) program held a four-week challenge this February, where several teams competed to identify a set of influence bots on Twitter. A USC team led by Aram Galstyan, an associate professor at the USC Viterbi Department of Computer Science, won first place for accuracy and second place for timing.

For the DARPA competition, Galstyan’s team created a bot detection method that he said has proven 100 percent accurate. The overall process can be divided into three steps: initial bot detection, clustering and classification.

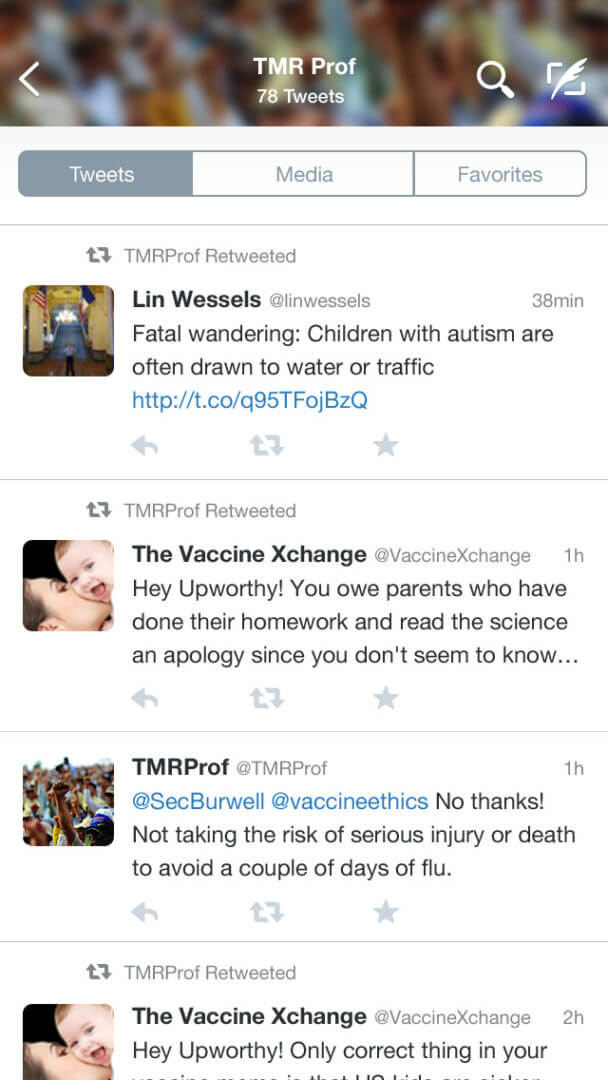

During initial bot detection, the team looks for linguistic, behavioral and consistency cues to uncover a first set of bots. These cues include unusual grammar, the number of tweets posted and stock pictures used as profile photos.
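To make the idea concrete, here is a minimal Python sketch of cue-based screening. It is not the USC team's method: the `Account` fields, the thresholds and the scoring rule are all assumptions made up for illustration, standing in for the grammar, tweet-volume and profile-photo cues mentioned above.

```python
from dataclasses import dataclass


@dataclass
class Account:
    # All fields are hypothetical stand-ins for the cues named in the article.
    handle: str
    tweets_per_day: float       # posting volume
    grammar_error_rate: float   # fraction of tweets with flagged grammar
    has_stock_photo: bool       # profile photo matches a stock image


# Illustrative thresholds only; real values would have to be tuned on data.
MAX_TWEETS_PER_DAY = 100.0
MAX_GRAMMAR_ERROR_RATE = 0.5


def suspicion_score(account: Account) -> int:
    """Count how many of the three cues an account triggers (0 to 3)."""
    score = 0
    if account.tweets_per_day > MAX_TWEETS_PER_DAY:
        score += 1
    if account.grammar_error_rate > MAX_GRAMMAR_ERROR_RATE:
        score += 1
    if account.has_stock_photo:
        score += 1
    return score


def initial_candidates(accounts, min_score=2):
    """Return handles of accounts that trigger at least min_score cues."""
    return [a.handle for a in accounts if suspicion_score(a) >= min_score]
```

Requiring two or more cues, rather than one, is a design choice in this sketch: a single cue such as heavy posting is common among legitimate accounts.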

The second step focuses on identifying the accounts to which the first set of bots is linked. Studies suggest that most bot developers create clusters in which bots are connected to each other to increase retweets.
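One way to picture this step is as a search over a graph of account connections. The sketch below is an assumption-laden illustration, not the team's algorithm: `edges` is a hypothetical list of account pairs linked by retweets or follows, and the rule "flag any account linked to at least `min_links` known bots" is invented here to show how a seed set could grow.

```python
from collections import defaultdict


def expand_cluster(edges, seeds, min_links=2):
    """Grow a set of suspected bots from a seed set.

    edges: iterable of (account, account) pairs, treated as undirected links.
    seeds: accounts already flagged by the initial screening.
    min_links: hypothetical threshold; an account is flagged once it is
        linked to at least this many already-flagged accounts.
    """
    neighbors = defaultdict(set)
    for a, b in edges:
        neighbors[a].add(b)
        neighbors[b].add(a)

    flagged = set(seeds)
    changed = True
    # Repeat until a full pass adds no new account.
    while changed:
        changed = False
        for account in list(neighbors):
            if account in flagged:
                continue
            if len(neighbors[account] & flagged) >= min_links:
                flagged.add(account)
                changed = True
    return flagged
```

Because newly flagged accounts count toward the threshold for their own neighbors, the set can spread several hops out from the seeds, which is the clustering behavior the paragraph describes.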

Finally, once a certain number of bots has been found, algorithms can be used to classify them.

While bot detection methods have become more accurate, bot creators are also enhancing their programming skills. The number of influence bots, as well as their degree of sophistication, will likely increase in the future, Galstyan said. We can expect new sets of more complex bots to engage in advertising activities and political influencing.